New CIMSS Tornado Model to simulate damages and losses

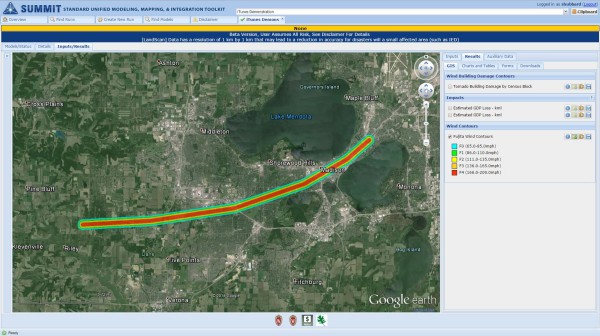

A new tornado model allows users to interactively create the tornado path using an interface based on Google Earth. The model then calculates the damages and losses of the simulated tornado. Credit: Shane Hubbard, CIMSS.

Preparation, plain and simple, is the key to getting through severe weather events. Knowing what to do and when can make the difference in minimizing, and even preventing, property damage and fatalities. For emergency managers, that means engaging in tabletop or full-scale exercises to test their responses and ensure that they are ready to handle any weather situation.

Until recently, though, something had been missing: easy access to a publicly available tornado model.

“For some hazards geospatial modeling tools are available to model the hazard magnitude and location. There are even fewer models that can use the hazard information to calculate the potential damages and losses from those events. A publicly available tornado model that creates a tornado path does not exist, nor is there a model that can estimate the losses from a tornado event,” said Shane Hubbard, a scientist with the Cooperative Institute for Meteorological Satellite Studies.

For emergency managers, modeling potential damage is a critical component of testing their ability to respond effectively in the event of a real tornado – thinking about what resources to send, as well as where and when to send them.

Hubbard, with help from CIMSS programmer Tommy Jasmin, has changed the modeling landscape by producing the Tornado Model, which has been delivered and will be included in the Department of Homeland Security’s (DHS) Standard Unified Modeling, Mapping, and Integration Toolkit (SUMMIT).

Four years ago, Sandia National Laboratories contacted Hubbard seeking outside expertise in modeling tornadoes, floods, and dam breaches. Sandia was supporting the Federal Emergency Management Agency (FEMA) and the DHS in developing national-level exercises to simulate large-scale events. Such simulations allow the departments to evaluate their preparedness.

After helping Sandia on an ad hoc basis with modeling a variety of tornado scenarios, Hubbard offered to create a tornado model that could be customized by the user. Not long after Hubbard arrived at CIMSS in 2013, Sandia secured funding through DHS, specifically through the Resilient Systems Division of the DHS Science and Technology Directorate, to begin developing that model.

“With this model, the goal is to allow communities to create plans and prepare for tornadoes,” said Hubbard.

Initially, Hubbard wrote the code to run the model himself, but he soon enlisted Jasmin because of his programming experience.

“The SUMMIT modeling architecture required that the model be developed leveraging libraries that were new to the (Space Science and Engineering) Center. Given Tommy’s background in GIS and Java and some of these key concepts that are really important for this software, I quickly identified him as a person who would have the background to learn and implement this new library,” said Hubbard.

Hubbard was impressed with Jasmin’s rapid mastery of GeoTools, a geospatial library that was unfamiliar to the Center. He described that expertise as a “huge asset for the Center going forward,” since this specialized knowledge could mean more independence from licensed software and increased flexibility in scripting programs useful for the Center’s research missions. In addition, models using this library can produce faster results, allowing for real-time capabilities in the future.

Although Jasmin was now on board, other obstacles to developing the model remained. Those obstacles were literally buildings in the path of the possible tornado. Including the location of buildings as a layer in the model is crucial to its ability to estimate damage and losses, thereby helping the user recognize the effects of tornado winds on property.

“One of the difficult things is that there is no national layer of building inventory… a layer representing where all the buildings are,” Hubbard said.

Fortunately, FEMA’s HAZUS-MH software – a damage and loss estimation tool for hurricanes, floods, and earthquakes – includes something approximating a building inventory, built from U.S. Census data (which shows concentration of people) and Dun and Bradstreet data (which shows where businesses are), among other types of data. Combining that information with a simulated tornado path in the Tornado Model allows the user to calculate a damage estimate.

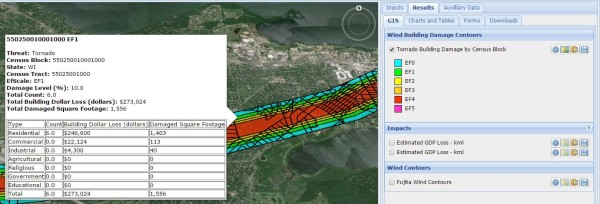

The tornado model allows users to select areas along the simulated tornado’s path to see the estimated damages and losses. Credit: Shane Hubbard, CIMSS.

To produce that estimate, the model uses results from previous research to help create realistic damage curves. As an example, Jasmin noted their use of typical wind-speed levels on the Fujita Scale for designating tornadoes and their damages. Hubbard mentioned that they were also using an “idealized vortex model” originally developed in the 1950s to simulate the tornado’s path; despite the model’s age, its equations continue to be proven valid.
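To make the idea of a vortex model feeding a damage curve more concrete, here is a minimal sketch. The article does not name the vortex model or publish the damage curves, so the example substitutes a Rankine combined vortex (a common textbook idealization, not necessarily the one used in the Tornado Model) and a purely hypothetical linear damage curve. The function names, the core radius, and the onset and total-damage thresholds are all illustrative assumptions.

```python
def rankine_wind_speed(r_m, v_max_mph, core_radius_m):
    """Tangential wind speed (mph) at distance r_m from the vortex center.

    Rankine combined vortex (an assumed stand-in for the unnamed model):
    speed grows linearly inside the core and decays as 1/r outside it.
    """
    if r_m < 0 or core_radius_m <= 0:
        raise ValueError("distance must be >= 0 and core radius must be > 0")
    if r_m <= core_radius_m:
        return v_max_mph * r_m / core_radius_m
    return v_max_mph * core_radius_m / r_m


def damage_fraction(wind_mph, onset_mph=60.0, total_mph=200.0):
    """Hypothetical linear damage curve, not the model's actual curve.

    Returns 0.0 at or below onset_mph, 1.0 at or above total_mph,
    and a straight-line interpolation in between.
    """
    if wind_mph <= onset_mph:
        return 0.0
    if wind_mph >= total_mph:
        return 1.0
    return (wind_mph - onset_mph) / (total_mph - onset_mph)


if __name__ == "__main__":
    # A building 200 m from the center of a vortex with a 100 m core
    # and a 130 mph peak wind (all values chosen for illustration).
    wind = rankine_wind_speed(200.0, 130.0, 100.0)
    print(wind, damage_fraction(wind))
```

The sketch ignores the tornado's forward motion and any variation in building type, both of which a real damage model would likely need to account for.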

The tornado model uses this information, along with inputs from the user, to output interactive maps, as well as graphs and charts.

While that process might sound simple, Hubbard and Jasmin worked hard to develop a user interface that would be easy enough for anyone to use, regardless of their technical background. For example, less technical users can use the default average wind speed and average width based on historical averages for the strength of the tornado (say, an F2) that they select for their simulation, while more advanced users can specify a particular wind speed and width for that F2 tornado.
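The default-versus-override behavior described above might look something like the following sketch. The wind-speed ranges are those of the original Fujita Scale, but everything else is an assumption made for illustration: the `build_params` function, the `TornadoParams` class, the use of each range's midpoint as a stand-in for a historical average, and the placeholder path widths are hypothetical and are not taken from the Tornado Model.

```python
from dataclasses import dataclass
from typing import Optional

# Original Fujita Scale wind-speed ranges, in mph.
FUJITA_WIND_MPH = {
    "F0": (40, 72), "F1": (73, 112), "F2": (113, 157),
    "F3": (158, 206), "F4": (207, 260), "F5": (261, 318),
}

# Placeholder path widths in meters: hypothetical values, NOT historical data.
DEFAULT_WIDTH_M = {"F0": 50, "F1": 100, "F2": 200, "F3": 400, "F4": 600, "F5": 900}


@dataclass(frozen=True)
class TornadoParams:
    rating: str
    wind_mph: float
    width_m: float


def build_params(rating: str,
                 wind_mph: Optional[float] = None,
                 width_m: Optional[float] = None) -> TornadoParams:
    """Fill in defaults for a rating unless the user supplies overrides."""
    if rating not in FUJITA_WIND_MPH:
        raise ValueError(f"unknown rating: {rating}")
    low, high = FUJITA_WIND_MPH[rating]
    if wind_mph is None:
        # Assumption: midpoint of the range stands in for a historical average.
        wind_mph = (low + high) / 2
    elif not low <= wind_mph <= high:
        raise ValueError(f"{wind_mph} mph is outside the {rating} range")
    if width_m is None:
        width_m = DEFAULT_WIDTH_M[rating]
    elif width_m <= 0:
        raise ValueError("width must be positive")
    return TornadoParams(rating, float(wind_mph), float(width_m))


if __name__ == "__main__":
    print(build_params("F2"))                              # less technical user
    print(build_params("F2", wind_mph=150, width_m=300))   # advanced user
```

Rejecting an override that falls outside the selected rating's range is one possible design choice; an interface could just as reasonably adjust the rating to match the wind speed the user enters.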

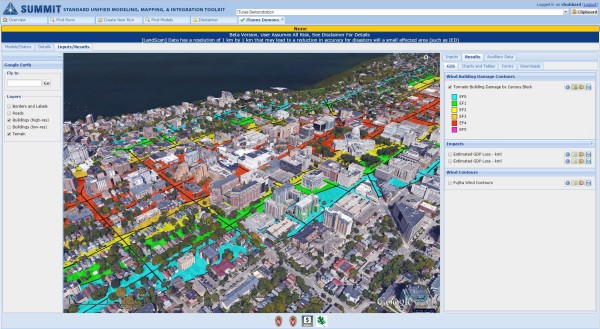

As part of SUMMIT, the Tornado Model, which consists of a tornado path model and a building damage model created by Jasmin and Hubbard, also leverages population and economic effects models created elsewhere. The Model also includes an interface created by Sandia National Laboratories using Google Earth that allows the user to interactively create the tornado path.

The model produces an interactive map that allows users to look at building damages along the tornado path by Census Block, estimating the number of buildings impacted and the estimated loss in dollars and square footage within a very specific area. The tornado path is semi-transparent so that the Google Earth map remains visible underneath, making features of the affected community more readily recognizable.

A close-up view of the tornado path and the buildings the model has calculated would be affected by the simulated tornado. Credit: Shane Hubbard, CIMSS.

Alternatively, users can examine the model results in a table within SUMMIT, looking at damage and loss totals, or export the data to a spreadsheet outside the toolkit.
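As a rough illustration of how per-block totals and a spreadsheet export could be produced, consider the sketch below. It is not SUMMIT's implementation: the record fields (`block_id`, `value_usd`, `sqft`, `damage_fraction`), the function names, and the CSV layout are all hypothetical, and the inventory here is a list of individual buildings purely for simplicity.

```python
import csv
import io
from collections import defaultdict


def summarize_by_block(buildings):
    """Total impacted buildings, dollar loss, and damaged area per block.

    Each building is a dict with hypothetical keys: block_id, value_usd,
    sqft, and damage_fraction (0.0 to 1.0). Undamaged buildings are skipped.
    """
    totals = defaultdict(lambda: {"buildings": 0, "loss_usd": 0.0, "sqft": 0.0})
    for b in buildings:
        if b["damage_fraction"] <= 0:
            continue
        t = totals[b["block_id"]]
        t["buildings"] += 1
        t["loss_usd"] += b["value_usd"] * b["damage_fraction"]
        t["sqft"] += b["sqft"] * b["damage_fraction"]
    return dict(totals)


def to_csv(totals):
    """Render the per-block totals as CSV text a spreadsheet can open."""
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    writer.writerow(["block_id", "buildings_impacted", "loss_usd", "sqft_damaged"])
    for block_id in sorted(totals):
        t = totals[block_id]
        writer.writerow([block_id, t["buildings"],
                         f"{t['loss_usd']:.2f}", f"{t['sqft']:.1f}"])
    return buf.getvalue()


if __name__ == "__main__":
    # Made-up sample inventory for two blocks.
    sample = [
        {"block_id": "A", "value_usd": 100000, "sqft": 2000, "damage_fraction": 0.5},
        {"block_id": "A", "value_usd": 200000, "sqft": 1000, "damage_fraction": 0.25},
        {"block_id": "B", "value_usd": 150000, "sqft": 1500, "damage_fraction": 0.0},
    ]
    print(to_csv(summarize_by_block(sample)))
```

Writing to an in-memory buffer keeps the example self-contained; saving the returned text to a `.csv` file would make it readable by most spreadsheet programs.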

While the model is in beta testing within SUMMIT, Hubbard is already looking ahead to future improvements. Soon users will be able to upload information about their actual building inventory, rather than relying on the inventory provided by the model. Hubbard is also considering updating the current, simplistic damage curves, which are based on the North Texas Council of Governments estimates of damage, currently the best publicly available estimates that suit the model’s needs.

Another possible extension of the model is allowing users to access historical tornado data through the National Weather Service Storm Prediction Center. As Hubbard noted, past events may repeat themselves, making it worthwhile to study them. Users could see the present-day impacts of a past tornado track; an area may have seen a marked increase in development, meaning more buildings that could be affected, not to mention the likely increases in property values over time, meaning significantly higher damages and losses.

In addition, Hubbard is interested in gaining additional field data from tornadoes to further validate and improve the model.

Hubbard has also begun investigating use of the model in real time during an active tornado to estimate damage and loss. Such an application certainly fits his history and interest in emergency response. But more than that, he is eager to inject new research and science into the project – seeking to make the end product more useful to emergency managers, responders, and others.

by Leanne Avila